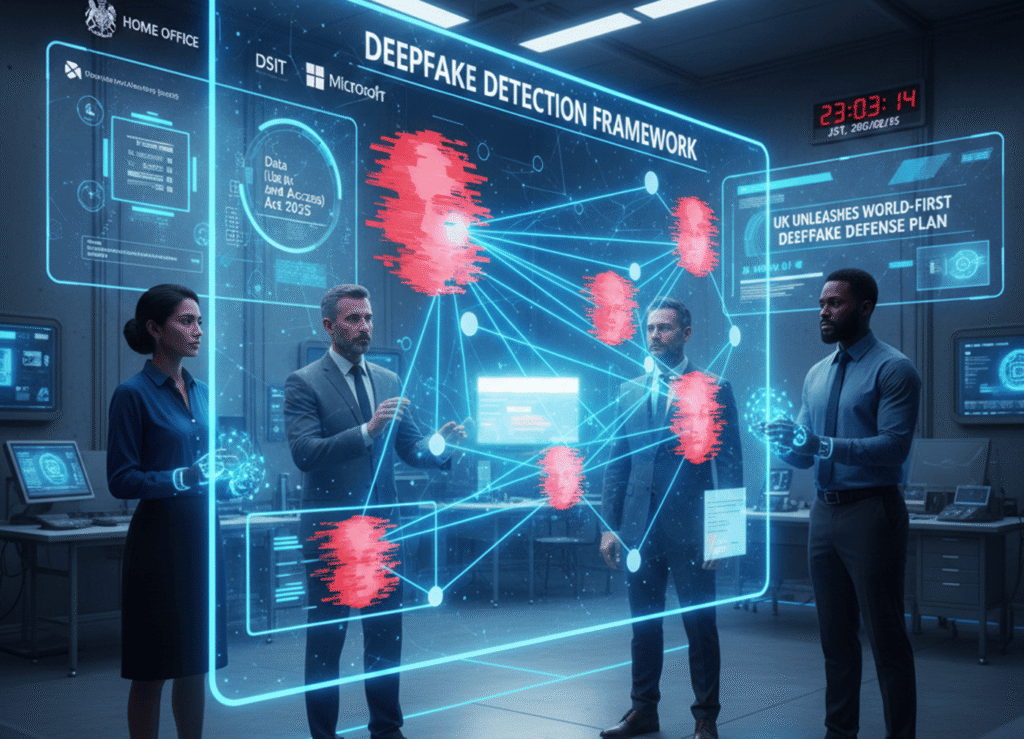

The UK Government has announced plans to develop what it describes as a “world-first” deepfake detection framework.

The aim is to curb the rapid spread of manipulated images and videos used for abuse, harassment, and criminal exploitation.

The initiative will be led by the Home Office and developed in collaboration with major technology companies, academic researchers, and technical experts.

Ministers say the framework will explore how emerging technologies can better recognise and assess deepfakes, while also setting clear expectations for how companies detect and respond to them. The announcement comes amid growing concern over the misuse of AI tools to generate sexualised images without consent, including images of children.

Rising concern over deepfake abuse

Deepfakes have become increasingly accessible as generative AI tools grow more sophisticated. While the technology has legitimate uses in areas such as film, education, and accessibility, law enforcement agencies and campaigners warn that it is being exploited at scale by criminals.

Government figures suggest the scale of the problem has expanded dramatically. Officials estimate that up to eight million deepfake images were shared in 2025 alone, compared with around 500,000 in 2023, a rise of as much as sixteen-fold in two years. Much of the growth is believed to be driven by easily available AI tools that allow users to generate explicit or degrading images with minimal technical knowledge.

Victims’ advocates say the impact can be devastating, particularly where sexualised images are created and distributed without consent. Such material can be difficult to remove once shared online and can cause long-lasting psychological, professional, and reputational harm.

Government and industry collaboration

The Home Office said the new framework would be developed through close collaboration between government departments, technology firms, academics, and subject-matter experts. Microsoft is among the companies named as working with the Government on the initiative.

Officials say the aim is not only to improve detection technologies, but also to establish common standards that platforms are expected to meet. By clarifying industry responsibilities, ministers hope to close what they describe as loopholes that allow harmful content to spread unchecked.

Minister for safeguarding Jess Phillips said the impact of deepfake abuse cut across all demographics.

“The devastation of being deepfaked without consent or knowledge is unmatched, and I have experienced it first hand,” she said.

“For the first time, this framework will take the injustice faced by millions to seek out the tactics of vile criminals, and close loopholes to stop them in their tracks so they have nowhere to hide.

“Ultimately, it is time to hold the technology industry to account, and protect our public, who should not be living in fear.”

Regulatory pressure on tech platforms

The announcement follows heightened regulatory scrutiny of technology companies over their handling of AI-generated content. Earlier this week, the UK’s data regulator opened a formal investigation into X and its AI subsidiary xAI over compliance with UK law, after the Grok chatbot was used to generate sexual deepfake images without consent.

Ofcom had already launched a probe into the platform and its chatbot several weeks earlier. X has since said it has introduced measures to address the issues raised by regulators, though details of those changes have not been fully disclosed.

Elon Musk’s social media platform has faced mounting criticism following reports that its tools were being used to create sexualised images, including of minors. Campaigners argue that existing safeguards have been insufficient to prevent abuse.

International investigations intensify

Pressure on X is not limited to the UK. The company is also under investigation by the European Commission, and French prosecutors this week raided its offices in France as part of a preliminary inquiry. The French inquiry is examining alleged offences including the spread of child sexual abuse images and deepfakes.

The growing number of cross-border investigations reflects wider concern among governments that AI-generated abuse is outpacing existing laws and enforcement mechanisms. Regulators across Europe are increasingly focused on whether platforms are doing enough to prevent harm, particularly when tools are capable of generating illegal content at scale.

Law enforcement and public confidence

Police leaders have welcomed the Government’s proposed framework, saying it could strengthen their ability to respond to evolving threats. City of London Police Deputy Commissioner Nik Adams said clearer standards would help law enforcement keep pace with criminals who exploit new technologies.

Experts caution, however, that detection alone may not be enough. They argue that effective enforcement will also depend on swift takedown processes, stronger penalties for misuse, and international cooperation, given the global nature of online platforms.

London mayor Sir Sadiq Khan has warned that AI could usher in a new era of mass unemployment if governments, businesses, and regulators fail to act quickly to properly control the technology.