Meta has unveiled new AI chips, and they make one thing clear: the company wants to build more of the hardware behind its AI work itself. The new lineup shows Meta is expanding that effort in a bigger, more deliberate way.

In its update, Meta lays out four chips as part of a broader buildout inside the company. The release gives a clearer view of how the social media giant plans to support its growing AI needs across its platforms.

Meta’s next phase starts to show

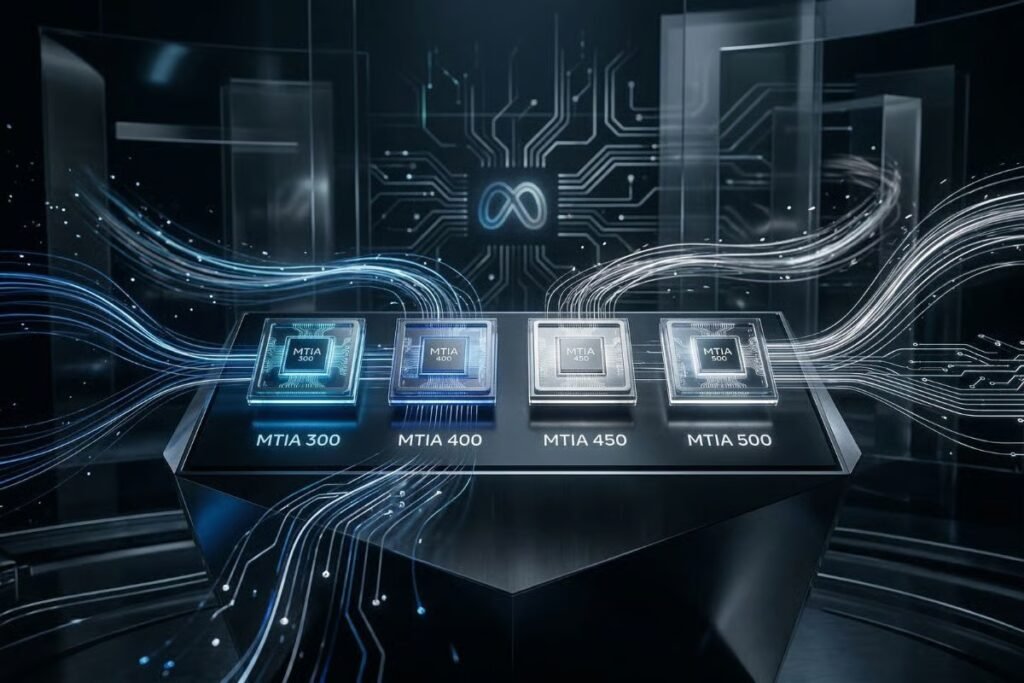

Meta is not presenting one chip and calling it progress. By naming MTIA 300, 400, 450, and 500 together, the company is showing how much of its in-house chip effort is now in motion.

Each chip marks a different step in that buildout:

- MTIA 300 laid the groundwork, starting with recommendation and ranking work and moving into training.

- MTIA 400 widened that scope to support newer generative AI workloads while still handling earlier ones.

- MTIA 450 was shaped more directly around running generative AI models.

- MTIA 500 pushes further in that same direction, with Meta framing it as another step up for generative AI inference.

The rollout is not entirely in the future tense, either. According to Meta, some of these chips are already deployed, with others scheduled to arrive in the next stages of its buildout.

The company also puts unusual weight on timing. It says all four generations were developed in less than two years, which makes the rollout look faster than a standard hardware update.

Cost, control, and a tighter grip

Meta is building these chips because buying AI hardware at scale is expensive, and relying too heavily on external suppliers leaves less room to shape that hardware to its own needs. Building more in-house could help the company keep AI costs in check.

It also gives Meta more say over how its systems are built for the work it cares about most. Off-the-shelf hardware can do the job, but custom chips give the company a chance to shape performance around its own products and priorities across Facebook, Instagram, WhatsApp, and the rest of its app family.

There is also a strategic angle to it. The more hardware Meta designs for itself, the less exposed it is to outside suppliers’ timelines, product decisions, and pricing. That gives the company a tighter grip on a part of the AI stack that is becoming more important to its business.

Meta does not present MTIA as a total replacement, though, and says it still plans to use a broader mix of internal and external chips.

Speed becomes part of the plan

Pace is part of the strategy now.

Meta says it can now produce a new chip about every six months, a much quicker pace than the long hardware cycles that used to define this kind of work.

That helps explain why this rollout feels so packed. The company describes a build process that keeps evolving from one generation to the next, using each round to respond faster to changing AI demands rather than waiting years for a major overhaul.

Reuse is a big part of that approach. By carrying over core parts of the setup from one generation to the next, Meta is trying to deploy new chips faster and with less friction.
