As AI becomes more accessible, concerns are growing about its impact on local democracy in Yorkshire and across the UK, where the technology is already being used to create convincing but false political content.

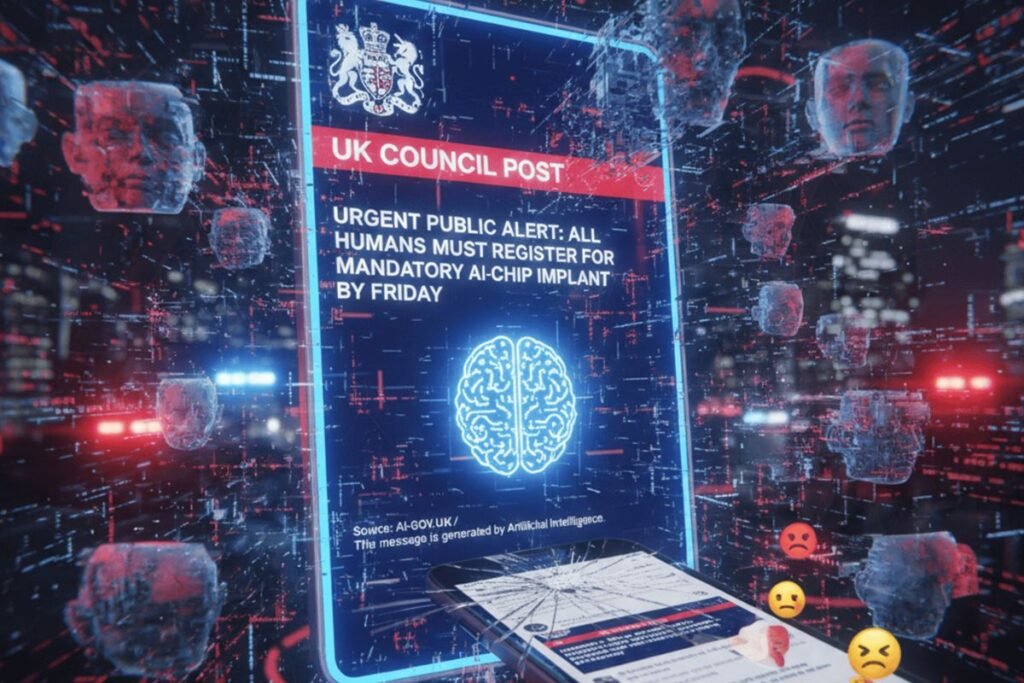

Recent fake social media posts posing as official council announcements have shown how easily AI-generated content can mislead the public, particularly in the run-up to elections.

Fake council posts spread online

The fabricated social media posts, which circulated widely online, appeared to come from City of York Council and promoted non-existent initiatives such as asking residents to house asylum seekers, recruiting volunteers to remove St George flags, or encouraging the public to fill potholes themselves.

At first glance, many of the images appeared convincing, with council-style branding, formal language, and realistic layouts that could easily pass for genuine during a quick scroll online.

According to reporting from the BBC, City of York Council confirmed the posts were fake, but by that point, they had already been shared thousands of times. Some reached accounts with hundreds of thousands of followers, meaning many people likely saw and believed the claims before any correction was issued.

Experts say this reflects a larger shift in how misinformation spreads online. AI tools have significantly lowered the skill and cost required to create realistic images, posters, and text, allowing users to produce misleading content quickly and at scale.

‘Pics or it didn’t happen’

Not so long ago, people online would answer a dubious claim with “pics or it didn’t happen”, demanding photo evidence that an event had actually occurred. But now that AI lets anyone produce convincing content at the drop of a hat, the internet is flooded with fabricated photos and other digital media designed to mislead, making it harder to tell what is real and what isn’t.

Thanks to AI, spreading misinformation online has become remarkably easy. Creating a convincing piece of fake media no longer requires advanced design skills or specialist software; a laptop and a basic understanding of AI tools are enough. Small details, such as blurred logos or spelling mistakes, often give these images away, but those flaws are easy to miss when skimming a social media feed.

Local council leaders warn that incidents like this are becoming increasingly challenging to manage and can have serious consequences. False claims linked to politically sensitive issues, particularly asylum and immigration, can damage social cohesion and trust in local authorities.

Experts say the real challenge is not simply correcting individual posts but keeping up with the sheer volume and speed at which misleading content circulates. Once a false post gains traction, its spread is difficult to stop. In some cases, councils have struggled to counter misinformation when content creators refuse to remove false posts that generate online engagement and ad revenue.

A global pattern of AI-driven disinformation

What is happening in Yorkshire is part of a much broader global trend, with AI-generated and AI-manipulated content already being used to mislead voters and influence political debate.

In the US, AI-generated robocalls mimicking then-President Joe Biden’s voice were used ahead of the 2024 New Hampshire primary to discourage people from voting, prompting investigations and regulatory intervention. During recent election cycles, AI-generated images falsely depicting political candidates in controversial situations have also circulated widely on social media.

Similar patterns have been reported worldwide. In countries such as Taiwan, deepfake videos and synthetic audio clips have used the likenesses of political leaders to sway public opinion. Across Europe, authorities have warned about coordinated disinformation campaigns using AI-altered images and videos during election periods. In Nigeria, manipulated audio recordings circulated during the 2023 election, falsely suggesting election interference and heightening political tensions.

Why AI makes misinformation harder to stop

Researchers studying disinformation say the key issue is not whether every voter believes false content, but how repeated exposure to it breeds confusion and doubt. Over time, people can become unsure what to believe at all, weakening confidence in democratic institutions.

AI accelerates this process by making false content faster and cheaper to produce, and easier to tailor to specific communities or local issues. Academics note that many people lack the time or motivation to verify what they see online, often sharing material that aligns with existing beliefs without checking its accuracy.

The future of politics in an AI era

As AI technology continues to advance and become more accessible, it is likely to play an even greater role in political campaigning and public debate. Used responsibly, it could improve engagement and access to information, but its misuse could reshape elections in troubling ways.

For the UK and other democracies, the challenge will be adapting to the AI wave through stronger digital literacy, more explicit rules for online platforms, and updated regulations, all without stifling free expression. The cost of producing misleading content may be low, but the long-term cost to democratic trust could be far higher.
